The Simulation That Failed

How a Tiny Worm Shattered Techno-Utopia

Jon Cogburn and Emily Beck Cogburn

We live in an age in which some of the wealthiest and most influential people on the planet believe that death is a bug in the system rather than an inescapable fact of life. In their vision, our minds are computational patterns waiting to be copied, optimized, and then preserved indefinitely in digital substrates. The hype around the possibility of immortality through mind uploading was massive in the first two decades of this century, with projects like the Russian venture Project 2045 (named for the year in which digital uploading of minds will supposedly be achieved) (Strategic Social Initiative 2018) and the startup company Nectome (Regalado 2018), which originally promised immortality through mind uploading and now pursues the more modest goal of researching memory (Nectome 2025). As Nectome’s shift reflects, researchers are realizing that complete mind uploading is not going to be achieved in the near future. Optimism has waned since 2009, when Henry Markram of the Blue Brain Project claimed that a simulation of the entire human brain would be possible within 10 years (Fildes 2009).

Still, many people hold out hope that someday digital immortality can be achieved. The extent of the techno-optimism is evidenced by the continuing popularity of cryonics companies such as Tomorrow Bio, which promises to freeze and preserve people until mind uploading or some other kind of immortality technology is developed (Tomorrow Bio 2025). Neuroscientist Dobromir Rahnev says that although it might not happen for 200 years or so, mind uploading or artificial brains are very likely technologically possible (Rahnev 2025). Influential techno-futurists like Ray Kurzweil, Hans Moravec, and Nick Bostrom have been preaching the gospel of uploading and artificial intelligence for years (Kurzweil 2005; Moravec 1988; Bostrom 2003). Even the philosopher David Chalmers stated in 2010 that human-level AI might be possible by 2100 (Chalmers 2010). Billionaires are using their considerable funds to bet that these people are right (Dujmovic 2023).

On the other side (which actually turns out to be the same side), many longtermists (people who believe that our ethical obligations are primarily to future generations) argue that one of our goals should be to protect the future of humanity from takeover by AI (Centre for Effective Altruism 2024). This has two different effects: first, to reinforce the idea that we should fear AI since it’s so powerful or potentially powerful, and second, to justify diverting resources away from helping people in need now toward mitigating this supposed future threat.

So the assumption of the techno-optimists and the longtermists is that someday AI will possess generalized intelligence on a level with human beings. But is this really possible?

Your Brain Is Not a Computer

Computational functionalism is the philosophical theory of mind that underlies the uploading fantasy. This is the view that minds are defined not by what they’re made of, but by what they do. That is, mental states are just functional roles in a system, and the brain is effectively a computer running software (Putnam 1967; Fodor 1975; Dennett 1991). From this perspective, uploading the mind is simply a matter of preserving the right kind of causal organization. If you get the functional structure right by copying the connectome, preserving the synaptic weights, and simulating the signaling, you have the mind. It doesn’t matter whether the substrate is carbon or silicon, flesh or code. What matters is the pattern.

This isn’t quite as simple as it sounds, or as uploading optimists make it out to be. As the philosopher David Chalmers points out, even if we accept that a digital upload of my mind is possible and that such an upload would be conscious (both highly questionable assumptions), it doesn’t necessarily follow that this upload would be me in the sense relevant to personal identity.

Some kind of connection between me and the upload of my mind is needed for the upload to actually be me. Philosophers who work on personal identity, however, disagree about what the nature of this connection would need to be. We should all be able to agree, though, that not just any intelligent being we create is me, even if it resembles me in nearly every way, as a twin would. This is the difference between qualitative and numerical identity. If I want to preserve my own life, then I need to create something that isn’t just like me (qualitative identity), but actually is me (numerical identity). Chalmers himself reserves judgment on whether this might be possible (Chalmers 2010).

Uploading optimists then must argue not only that uploading is possible, and that the upload would be conscious or in some sense human, but also that personal identity would be preserved by the upload. If they can do this, then they can conclude that immortality is possible for those who can afford it. But this turns out to be a tall order. As we will argue in what follows, step one of the uploading optimists’ agenda, recreating human intelligence, is almost certainly impossible.

The Case of Our Worm

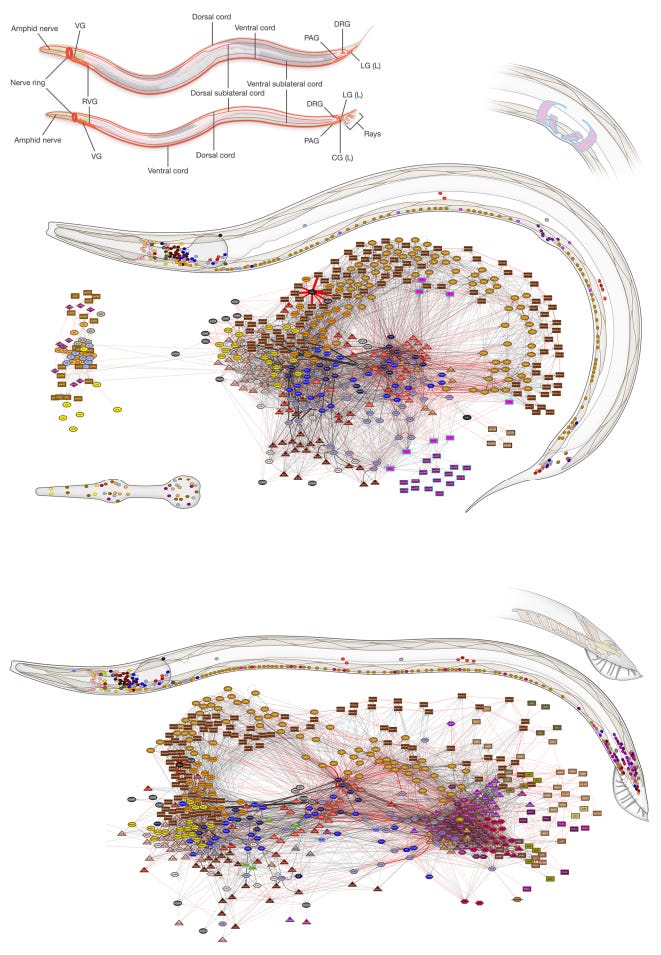

The best argument against uploading comes, not from a scientist or a philosopher, but from Caenorhabditis elegans, a millimeter-long nematode with just 302 neurons.

Scientists mapped the entire nervous system of this indefatigable hermaphrodite almost forty years ago (White et al. 1986). Thus, if structure alone were sufficient to reproduce behavior, we ought to know exactly how this creature operates. If any organism were simulable, it should be C. elegans. But the simulations fail. C. elegans does not cooperate. And in its refusal, it reveals that the metaphysics underwriting the dream of uploading may be, not just premature, but mistaken at its core.

Let’s pause now for the testimony of our worm friend. C. elegans is likely not conscious, at least not in any robust or introspectively accessible way. But it is behaviorally complex. It can navigate chemical gradients, adjust its movement based on hunger, and enter dormant states in response to environmental stress. It has no brain as such, only a small nervous system which contains, as noted above, 302 neurons and roughly 7,000 synapses. And, as we also mentioned, its full connectome has been known for decades (White et al. 1986). Unlike the human brain, its development is deterministic. Every hermaphroditic worm has the same neural layout. No messy individual variation. No hidden variables. In short, C. elegans is the ideal case for a reductionist theory of mind. Again, if mapping structure were enough to recover function, then this worm should be simulable.

And yet, it is not.

The OpenWorm project, begun in 2011, has brought together neuroscientists, computer scientists, and roboticists to simulate the worm’s nervous system, body, and environment. Because of the complexity of the factors causing and affecting the worm’s behavior, multiple kinds of models are needed to simulate different environments, micro and macro level properties of the worm, and chemical variations in the worm’s body and nervous system.

A number of properties of biological creatures make this effort challenging. First, there’s developmental plasticity. Neurons do not arrive pre-programmed. Their functional roles emerge through use, modulation, and adaptation (Karmiloff-Smith 1992). Second, there’s embodiment. The nervous system is embedded in a body, and that body is situated in a world (Noë 2004; Chemero 2009). Proprioceptive feedback, hormonal states, and biomechanical constraints all shape what the nervous system does. Third, there’s environmental contingency. A worm in one context behaves differently than a worm in another, not because its connectome has changed, but because behavior is context-sensitive.
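The third point can be made concrete with a deliberately crude toy. The sketch below is not the OpenWorm model or any real worm circuit: the three-neuron weight table, the `gain` parameter (standing in for a hormonal or neuromodulatory state), and the labels "sated" and "hungry" are all invented for illustration. It shows only that one fixed wiring diagram, given the same stimulus, can settle into different activity depending on a variable that appears nowhere in the wiring.

```python
import math

# Hypothetical 3-neuron "connectome": WEIGHTS[i][j] is the synaptic weight
# from neuron j to neuron i. These numbers are invented for illustration.
WEIGHTS = [
    [0.0, 1.2, -0.8],
    [0.9, 0.0, 0.5],
    [-0.6, 1.1, 0.0],
]


def step(state, stimulus, gain):
    """Advance the toy network by one time step.

    `gain` is an assumed stand-in for a bodily or neuromodulatory state
    that scales how strongly neurons respond. It is not part of WEIGHTS.
    """
    new_state = []
    for i, row in enumerate(WEIGHTS):
        drive = sum(w * s for w, s in zip(row, state)) + stimulus[i]
        new_state.append(math.tanh(gain * drive))
    return new_state


def run(stimulus, gain, steps=50):
    """Run the network from rest and return its final activity."""
    state = [0.0, 0.0, 0.0]
    for _ in range(steps):
        state = step(state, stimulus, gain)
    return state


if __name__ == "__main__":
    stimulus = [0.5, 0.0, 0.0]          # the same input in both conditions
    sated = run(stimulus, gain=0.3)     # hypothetical low-arousal state
    hungry = run(stimulus, gain=2.0)    # hypothetical high-arousal state
    print("same wiring, low gain: ", [round(x, 3) for x in sated])
    print("same wiring, high gain:", [round(x, 3) for x in hungry])
```

Knowing `WEIGHTS` exactly tells you nothing about which of the two outcomes you will see. A real nervous system has many such variables, most of them unmeasured, which is why the connectome alone underdetermines behavior.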

After years of study, researchers still don’t understand exactly which specific factors (such as subcellular calcium signals and the chemical environment of the nervous system as a whole) affect the behavior of which neurons, or how those neurons in turn affect the behavior of the entire organism. Add to that environmental variation, which must be modeled separately, as well as many other factors that are in some cases not well understood, and you end up with a number of models simulating different aspects of the worm in different situations.

Part of the OpenWorm project is developing a system that will allow these different models to be integrated, but various scientists, including Balázs Szigeti, have stated that a perfect or complete simulation of the worm is probably impossible (Szigeti et al. 2014). Constructing and using these specific kinds of models has deepened our understanding of neurology and biology. However, it will not result in a fully functional, complete simulation of the worm.

Why does this matter? Because if we cannot simulate the behavior of a 302-neuron worm with a fully mapped nervous system, then the idea that we could simulate the 86 billion neurons of the human brain, which remain unmapped, starts to look like a metaphysical fantasy. This is especially true since the worm has taught us that simulating human behavior would mean not just mapping the brain, but also replicating the human’s environment, the chemical factors in the nervous system mentioned above, and a multitude of other variables that we cannot (at least as of now) understand or recreate.

Modeling Is Not Reproduction

A deeper error than the failure to simulate the worm lies at the heart of the uploading dream: the conflation of modeling with reproduction. Scientists use models to understand complex systems. A good model abstracts key features to explain or predict behavior (Morgan and Morrison 1999; Cartwright 1983). But a model is not the thing it represents, something the people working on the OpenWorm project understand. This is why a complete simulation of the worm was never really their goal: such a simulation is impossible.

Uploading proponents regularly blur this line. They speak of “running” the mind on different hardware, as if the functional organization of consciousness were portable like an app. They assume that once the model is detailed enough, it becomes the thing itself. But this is not how biological systems work. As the philosopher John Searle points out, no one supposes that running a computer simulation of photosynthesis, however well scientists understand the process, will produce sugar as plants do (Searle 1980).

This is not merely an epistemological issue. It is ontological. The worm is not a bundle of functional roles instantiated in hardware. It is a history, a process, a system embedded in a world. You can model aspects of that. But you cannot abstract it away and reinstantiate it elsewhere (Harman 2002; Chemero 2009).

Lessons of the Worm

We began this article with the idea of mind uploading as a way for billionaires to pursue their own immortality. The worm shows that this is likely to be impossible. Even if Chalmers is right that a gradual replacement of the brain with synthetic parts would preserve personal identity, simulating a human being in this way is highly unlikely and almost certainly not feasible.

But, as of now, we have left the larger question of the possibility of generalized artificial intelligence unanswered. Maybe machines cannot emulate particular human beings, but does our worm show that artificial intelligence on a high, generalized level is impossible? In other words, does C. elegans show that we cannot create beings that think like humans?

We think it does, and the argument should be fairly obvious by now. If we cannot simulate the worm, we cannot simulate humans either. But why think we can’t simulate human intelligence as something separate from human beings? That, after all, is what AI is supposed to be: an intelligence equal to human intelligence (or better) that isn’t tied to a human being at all. We don’t need to emulate all human behavior, the AI apologist argues, to emulate human intelligence. In fact, the machines will be better than us since they won’t be burdened by emotion, irrationality, and all of the messy, unnecessary aspects of human behavior.

But, as it turns out, emotion and our bodies are essential, not expendable, parts of our intelligence. The way scientists are studying the worm shows this, if we just look closely. One clue is how intelligence is measured.

In 1950, Alan Turing proposed a test for machine intelligence that turns on whether a person can tell if they are exchanging written messages with a computer or with another human. Since then, many people have decided that this test is not an accurate measure of whether an artificial intelligence system possesses human-like general intelligence. And in proposing a Turing-like test for the worm, Szigeti points out that its environment and biology must also be simulated in such a way that an expert could not tell the difference between the worm’s behavior and the simulation’s. This acknowledgment highlights that cognition cannot be separated from biology.

Given that humans are also biological creatures, and that psychologists and other scientists agree that our cognition is affected by our biology, our environment, and other chemical and physical factors, there’s no reason to think that we could simulate or even measure human intelligence without taking these variables into account.

Moral and Political Stakes

The uploading dream is not just a misunderstanding of minds, but a politics of abstraction that empowers those who (with the assistance of trillions of dollars of government stimulus, contracts, and venture capital funding) speak in its name. The same ideology that insists your consciousness can be ported to the cloud also insists that personhood can be predicted, modeled, optimized, and even governed by algorithmic proxies. The simulation doesn’t have to work. It only has to structure investment, command authority, and deflect scrutiny.

This is where the worm matters most. Its refusal to be simulated punctures not only a scientific fantasy, but a worldview in which data and diagrams displace embodiment, history, and context. It reveals the limits of a techno-philosophical logic that equates representation with control and simplification with understanding.

The political consequences are not abstract. When predictive policing models treat communities as crime-producing input-output systems, they repeat the same epistemic sin as connectome functionalists: collapsing living systems into dead maps (Brantingham et al. 2018; Ferguson 2017; Lum and Isaac 2016). When AI alignment researchers propose that machine values can be extracted from human preferences, they echo the illusion that minds can be encoded and exported without remainder. When longtermist ideologues argue that the lives of future digital persons outweigh the moral claims of actual embodied humans today, they naturalize a metaphysics that has failed at the level of a worm.

Longtermism has already begun reshaping public discourse and institutional investment. Effective Altruist–aligned organizations such as Open Philanthropy and the Future of Humanity Institute have directed hundreds of millions of dollars toward speculative research on digital consciousness, artificial general intelligence, and x-risk mitigation.

They justify this expenditure of resources with abstract population ethics and the presumed moral weight of unborn, possibly simulated beings (Torres 2023; Gebru et al. 2021). In the UK and US, government partnerships with longtermist-affiliated institutions have begun channeling public research funds toward AI safety and brain emulation work. Meanwhile, actual social welfare infrastructure is being gutted. The speculative metaphysics of uploading becomes, in practice, a pretext for diverting resources from desperate present human need to an incredibly stupid digital eschatology (Crawford 2021).

This isn’t just bad theory. It’s dangerous, ecocidal nonsense. Just one example is the proposed AI data center in Wyoming that would use more electricity than all the houses in the state, with a potential to expand its size five-fold (Gruver and O’Brien 2025). We are destroying our planet with unattainable dreams of immortality and machine intelligence.

We should not be building policy, ethics, or existential risk mitigation strategies atop a worldview that cannot simulate the behavior of a creature with 302 neurons. The refusal of C. elegans to become a digital avatar is not an anomaly to be overcome; it is a revelation. Minds are not code. Life is not a spreadsheet. And if we’re going to save ourselves, our values, our societies, and the environment, we’d do much better to ignore science fiction in favor of real, present biological and ecological needs.

Coda: “Waiting for the Worms” — Pink Floyd.

Bibliography

Bostrom, Nick. 2003. “Are You Living in a Computer Simulation?” Philosophical Quarterly 53 (211): 243–255.

Brantingham, P. Jeffrey, Matthew Valasik, and George E. Tita. 2018. “Does Predictive Policing Lead to Biased Arrests? Results from a Randomized Controlled Trial.” Statistics and Public Policy 5 (1): 1–6.

Cartwright, Nancy. 1983. How the Laws of Physics Lie. Oxford: Clarendon Press.

Centre for Effective Altruism. 2024. “Longtermism.” Accessed July 25, 2025. https://www.centreforeffectivealtruism.org/longtermism

Chalmers, David J. 2010. “The Singularity: A Philosophical Analysis.” Journal of Consciousness Studies 17 (9–10): 7–65.

Chemero, Anthony. 2009. Radical Embodied Cognitive Science. Cambridge, MA: MIT Press.

Crawford, Kate. 2021. Atlas of AI: Politics, Power, and the Planetary Costs of Artificial Intelligence. New Haven: Yale University Press.

Dennett, Daniel C. 1991. Consciousness Explained. Boston: Little, Brown.

Dujmovic, Jurica. 2023. “This is your brain on AI: Powerful billionaires are pouring money into life extending technology and they just might succeed.” MarketWatch. Accessed July 25, 2025. https://www.marketwatch.com/story/upload-your-mind-or-alter-genetics-powerful-billionaires-are-pouring-money-into-life-extending-technology-and-they-just-might-succeed-6e1042f4

Ferguson, Andrew Guthrie. 2017. The Rise of Big Data Policing: Surveillance, Race, and the Future of Law Enforcement. New York: NYU Press.

Fildes, Jonathan. 2009. “Artificial brain 10 years away.” BBC News. http://news.bbc.co.uk/2/hi/8164060.stm

Fodor, Jerry A. 1975. The Language of Thought. Cambridge, MA: Harvard University Press.

Gebru, Timnit, Emily M. Bender, Angelina McMillan-Major, and Shmargaret Shmitchell. 2021. “On the Dangers of Stochastic Parrots: Can Language Models Be Too Big?” In Proceedings of the 2021 ACM Conference on Fairness, Accountability, and Transparency, 610–623.

Gruver, Mead, and Matt O’Brien. 2025. “Cheyenne to host massive AI data center using more electricity than all Wyoming homes combined.” AP News. Accessed August 2, 2025. https://apnews.com/article/ai-artificial-intelligence-data-center-electricity-wyoming-cheyenne-44da7974e2d942acd8bf003ebe2e855a

Harman, Graham. 2002. Tool-Being: Heidegger and the Metaphysics of Objects. Chicago: Open Court.

Karmiloff-Smith, Annette. 1992. Beyond Modularity: A Developmental Perspective on Cognitive Science. Cambridge, MA: MIT Press.

Kurzweil, Ray. 2005. The Singularity Is Near: When Humans Transcend Biology. New York: Viking.

Lum, Kristian, and William Isaac. 2016. “To Predict and Serve?” Significance 13 (5): 14–19.

Marder, Eve. 2015. “Understanding Brains: Details, Intuition, and Big Data.” PLOS Biology 13 (5): e1002147.

Moravec, Hans. 1988. Mind Children: The Future of Robot and Human Intelligence. Cambridge, MA: Harvard University Press.

Morgan, Mary S., and Margaret Morrison, eds. 1999. Models as Mediators: Perspectives on Natural and Social Science. Cambridge: Cambridge University Press.

Nectome. 2025. “About us.” Accessed July 25, 2025. https://nectome.com/about-us/

Noë, Alva. 2004. Action in Perception. Cambridge, MA: MIT Press.

Parfit, Derek. 1984. Reasons and Persons. Oxford: Oxford University Press.

Putnam, Hilary. 1967. “Psychological Predicates.” In Art, Mind, and Religion, edited by William H. Capitan and Daniel D. Merrill, 37–48. Pittsburgh: University of Pittsburgh Press.

Rahnev, Dobromir. 2025. “Can you upload a human mind into a computer? A neuroscientist ponders what’s possible.” The Conversation. Accessed July 25, 2025. https://theconversation.com/can-you-upload-a-human-mind-into-a-computer-a-neuroscientist-ponders-whats-possible-250764

Regalado, Antonio. 2018. “A startup is pitching a mind uploading service that is 100% fatal.” MIT Technology Review. Accessed July 28, 2025. https://www.technologyreview.com/2018/03/13/144721/a-startup-is-pitching-a-mind-uploading-service-that-is-100-percent-fatal/

Searle, John. 1980. “Minds, Brains, and Programs.” Behavioral and Brain Sciences 3 (3): 417–457.

Sporns, Olaf. 2011. Networks of the Brain. Cambridge, MA: MIT Press.

Strategic Social Initiative. 2018. http://www.2045.com

Szigeti, Balázs, Padraig Gleeson, Michael Vella, et al. 2014. “OpenWorm: An Open-Science Approach to Modeling Caenorhabditis elegans.” Frontiers in Computational Neuroscience 8: 137. https://www.frontiersin.org/journals/computational-neuroscience/articles/10.3389/fncom.2014.00137/full

Thompson, Evan. 2007. Mind in Life: Biology, Phenomenology, and the Sciences of Mind. Cambridge, MA: Harvard University Press.

Tomorrow Bio. 2025. “Introduction to Cryopreservation.” Accessed July 25, 2025. https://www.tomorrow.bio/us/introduction-to-cryopreservation

Varela, Francisco J., Evan Thompson, and Eleanor Rosch. 1991. The Embodied Mind: Cognitive Science and Human Experience. Cambridge, MA: MIT Press.

White, J. G., E. Southgate, J. N. Thomson, and S. Brenner. 1986. “The Structure of the Nervous System of the Nematode Caenorhabditis elegans.” Philosophical Transactions of the Royal Society B 314 (1165): 1–340.
